Embodied AI - What is Embodied Artificial Intelligence?

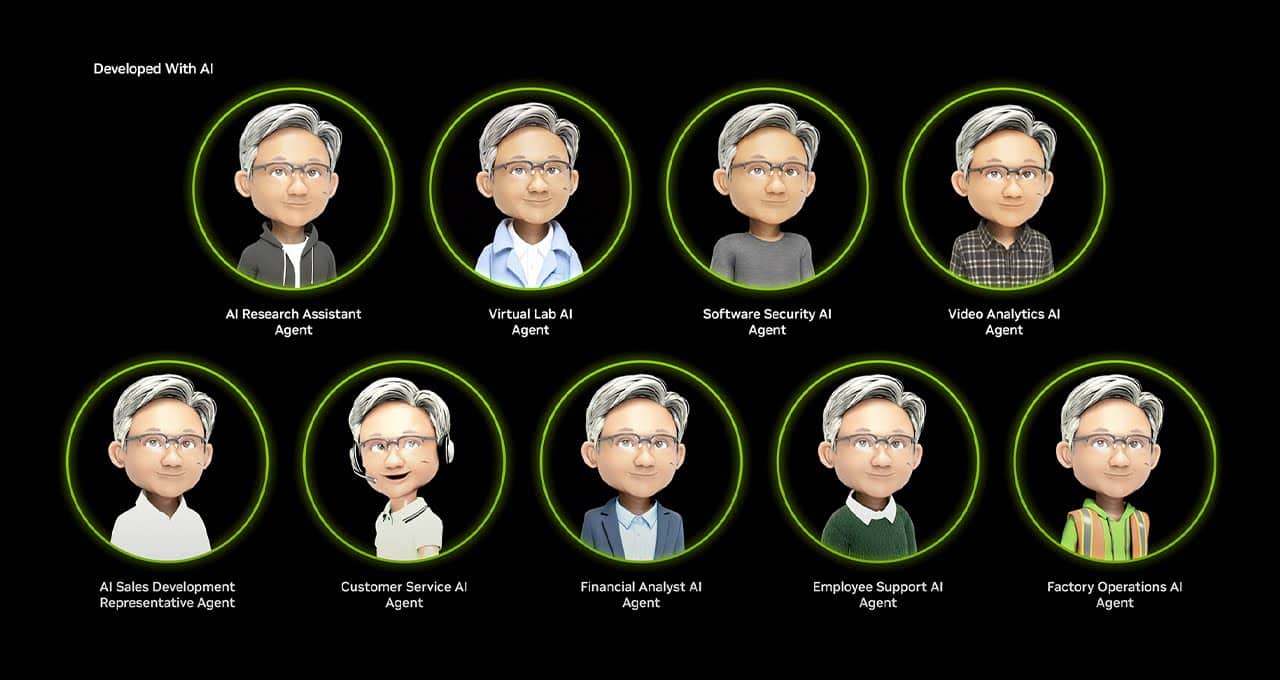

Embodied AI is the field of artificial intelligence (AI) that focuses on creating intelligent agents with a physical or virtual embodiment. Embodied AI agents can interact with their environment much as humans do. For a robot, embodied AI makes it possible to interact intelligently with its physical surroundings by combining perception, navigation, manipulation, and language understanding to perform tasks effectively. Whether the agent is a warehouse robot, a self-driving car, or a virtual character in a video game, embodiment enhances its capabilities by grounding them in the environment it operates in, whether physical or simulated.

Some key points about Embodied Artificial Intelligence:

Definition: Embodied AI involves designing and developing AI systems that control physical or virtual entities (such as robots, autonomous vehicles, or virtual avatars) and enable them to perceive their surroundings, move through the world, and take actions based on their sensory input.

Components:

- Perception: Embodied AI agents “see” and perceive their environment through vision or other senses.

- Action: They can navigate and interact with their surroundings to achieve specific goals.

- Language Interaction: Some embodied AI systems can hold natural language dialogues grounded in their environment.

- Reasoning: Embodied agents plan their actions with long-term consequences in mind.
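These components are often organized as a repeated perceive-reason-act loop. The Python sketch below illustrates that loop in a deliberately tiny, hypothetical 1-D world; `World`, `perceive`, `reason`, `act`, and `run_episode` are illustrative names, not part of any library, and language interaction is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Hypothetical toy 1-D corridor: agent and goal sit at integer positions."""
    agent_pos: int
    goal_pos: int


def perceive(world: World) -> int:
    # Perception: the agent senses the signed offset from itself to the goal.
    return world.goal_pos - world.agent_pos


def reason(offset: int) -> int:
    # Reasoning: choose a unit step toward the goal (-1, 0, or +1).
    return (offset > 0) - (offset < 0)


def act(world: World, step: int) -> None:
    # Action: the chosen step changes the state of the environment.
    world.agent_pos += step


def run_episode(world: World, max_steps: int = 100) -> int:
    """Run the perceive-reason-act loop; return the number of steps taken."""
    for steps in range(max_steps):
        offset = perceive(world)
        if offset == 0:
            return steps
        act(world, reason(offset))
    return max_steps
```

For example, `run_episode(World(agent_pos=0, goal_pos=5))` returns 5: the agent re-perceives after every action and takes one step per unit of distance.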

Challenges of Embodied AI:

- Open World: Effective embodied AI agents should be able to handle tasks, objects, and situations that differ significantly from their training data. This concept aligns with the “open set” problem, where agents encounter novel scenarios during deployment.

- Mobile Manipulation: Combining manipulation (interacting with objects) and navigation (moving through space) is essential for solving complex embodied tasks.

- Generative AI: Researchers use generative AI techniques for simulation, data generation, and policy learning in embodied AI systems.

- Language Model Planning: Turning arbitrary language commands into action plans is crucial for making embodied artificial intelligence practical in different scenarios.

In summary, embodied AI bridges the gap between AI algorithms and physical or virtual entities, enabling them to interact intelligently with their environment.

An example of embodied AI

Robotic Navigation and Object Manipulation: a robot that is designed to assist in a warehouse is an example of Embodied AI. This robot needs to navigate through the warehouse, identify specific items on shelves, and manipulate them (e.g., pick up a box and place it elsewhere).

Here is how each component of embodied AI plays a role in this warehouse scenario:

1- Perception:

- The robot uses its sensors (such as cameras, lidar, or depth sensors) to perceive its surroundings. It “sees” the layout of the warehouse, shelves, and objects.

- It processes this sensory input to create a map of the environment and detect objects.
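A minimal sketch of the mapping step, assuming a 2-D lidar that reports (bearing, range) pairs and a known robot pose: each return is projected into world coordinates, and the matching cell of an occupancy grid is marked as occupied. The function name and grid layout are hypothetical. Real mapping pipelines also ray-trace free space, fuse readings probabilistically, and estimate the pose itself (SLAM); none of that is shown here, and object detection is omitted.

```python
import math


def build_occupancy_grid(pose, scan, width, height, resolution):
    """Mark lidar hit points as occupied cells in a height x width grid.

    pose: (x, y, theta) of the robot in metres/radians (world frame).
    scan: list of (bearing, range) pairs, bearing relative to robot heading.
    resolution: metres per grid cell.
    Returns a list of rows: 1 = occupied, 0 = not observed as occupied.
    """
    grid = [[0] * width for _ in range(height)]
    x0, y0, theta = pose
    for bearing, rng in scan:
        # Project the beam endpoint into world coordinates.
        hx = x0 + rng * math.cos(theta + bearing)
        hy = y0 + rng * math.sin(theta + bearing)
        # Convert metres to integer cell indices.
        col = int(hx // resolution)
        row = int(hy // resolution)
        # Ignore hits that fall outside the mapped area.
        if 0 <= row < height and 0 <= col < width:
            grid[row][col] = 1
    return grid
```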

2- Navigation:

- The robot plans its path to reach a specific location (e.g., a shelf with a requested item).

- It avoids obstacles, adjusts its trajectory, and ensures collision-free movement.
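Path planning on an occupancy grid is commonly done with a graph search. The sketch below uses A* with a Manhattan-distance heuristic on a 4-connected grid where 1 marks an obstacle. This is one standard choice, assumed here for illustration, not necessarily what a given warehouse robot uses; trajectory smoothing and avoidance of moving obstacles are out of scope.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (1 = obstacle).

    start, goal: (row, col) tuples. Returns the list of cells from start to
    goal inclusive, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry superseded by a cheaper one
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None
```

Because every returned cell is free by construction, the path is collision-free with respect to the map; the robot still depends on fresh perception for obstacles the map does not yet contain.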

3- Object Manipulation:

- When the robot reaches the desired shelf, it uses its manipulator arm (equipped with grippers) to pick up the requested item.

- It calculates the optimal grasp point, adjusts its grip force, and lifts the object.
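Grip force can be estimated from a simple friction model. Assuming a parallel-jaw gripper with two contact points, friction coefficient μ, and upward lifting acceleration a, friction must support the load: 2μN ≥ m(g + a), where N is the normal force applied by each finger. The sketch below solves for N and multiplies it by a safety factor; the function name and the default factor of 1.5 are arbitrary illustrative choices, and grasp-point selection is not modeled.

```python
G = 9.81  # gravitational acceleration, m/s^2


def required_grip_force(mass_kg, friction_coeff, lift_accel=0.0, safety_factor=1.5):
    """Normal force (N) each finger of a two-finger gripper should apply.

    Friction at two contacts must carry the load: 2 * mu * N >= m * (g + a),
    so N = safety_factor * m * (g + a) / (2 * mu).
    """
    if mass_kg < 0 or friction_coeff <= 0:
        raise ValueError("mass must be non-negative and friction coefficient positive")
    return safety_factor * mass_kg * (G + lift_accel) / (2 * friction_coeff)
```

For a 2 kg box with μ = 0.5 lifted slowly (a ≈ 0), `required_grip_force(2.0, 0.5)` gives about 29.4 N per finger.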

4- Language Interaction:

- A human operator can communicate with the robot using natural language commands.

- For example, the operator might say, “Robot, fetch the blue box from the third shelf.”
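To act on such a command, the robot must map free-form text to a structured goal. Modern systems typically use a language model for this step; the sketch below substitutes a hypothetical regular-expression parser that only understands the pattern "fetch the <color> <object> from the <ordinal> shelf", just to show what a structured result might look like. `FetchCommand` and `parse_command` are illustrative names.

```python
import re
from dataclasses import dataclass

# Hypothetical vocabulary: only the first five shelves are recognized.
ORDINALS = {"first": 1, "second": 2, "third": 3, "fourth": 4, "fifth": 5}

PATTERN = re.compile(
    r"fetch the (?P<color>\w+) (?P<obj>\w+) from the (?P<ordinal>\w+) shelf",
    re.IGNORECASE,
)


@dataclass
class FetchCommand:
    color: str
    obj: str
    shelf: int


def parse_command(text):
    """Turn a fetch instruction into a FetchCommand, or None if it is not understood."""
    m = PATTERN.search(text)
    if m is None or m.group("ordinal").lower() not in ORDINALS:
        return None
    return FetchCommand(
        color=m.group("color").lower(),
        obj=m.group("obj").lower(),
        shelf=ORDINALS[m.group("ordinal").lower()],
    )
```

`parse_command("Robot, fetch the blue box from the third shelf.")` yields `FetchCommand(color='blue', obj='box', shelf=3)`; anything outside the pattern returns `None`, which is exactly the brittleness that language-model planners aim to overcome.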

5- Reasoning:

- The robot interprets the command, plans its actions, and executes them.

- It considers factors like object weight, stability, and safety during manipulation.
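Once the goal is structured, reasoning can expand it into primitive actions and check constraints before anything is executed. In this sketch the action names, the `Item` record, the `plan_fetch` function, and the 10 kg payload limit are all hypothetical. Only weight is checked, whereas a real planner would also reason about stability and other safety constraints.

```python
from dataclasses import dataclass

MAX_PAYLOAD_KG = 10.0  # hypothetical payload limit of the manipulator arm


@dataclass
class Item:
    name: str
    shelf: int
    mass_kg: float


def plan_fetch(item, drop_off="packing_station", max_payload=MAX_PAYLOAD_KG):
    """Expand a fetch request into primitive steps, refusing unsafe lifts."""
    # Safety check before planning: never attempt a lift beyond the arm's limit.
    if item.mass_kg > max_payload:
        raise ValueError(f"{item.name} ({item.mass_kg} kg) exceeds the payload limit")
    return [
        ("navigate_to", f"shelf_{item.shelf}"),
        ("grasp", item.name),
        ("lift", item.name),
        ("navigate_to", drop_off),
        ("place", item.name),
    ]
```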

6- Adaptability:

- If the warehouse layout changes (new shelves, different items), the robot adapts by re-perceiving the environment and updating its map.
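Adapting to a layout change can be sketched as comparing a fresh occupancy grid against the stored one and re-planning only when the current path crosses a newly occupied cell. Both functions below are hypothetical helpers that assume grids of equal size with 1 = occupied.

```python
def update_map(stored, observed):
    """Replace the stored grid with a fresh observation and report what changed.

    Returns (new_grid, changed), where changed is the set of (row, col) cells
    whose occupancy differs between the stored and observed grids.
    """
    changed = {
        (r, c)
        for r, row in enumerate(observed)
        for c, value in enumerate(row)
        if stored[r][c] != value
    }
    return [row[:] for row in observed], changed


def needs_replan(path, changed, new_grid):
    """A path must be re-planned if any of its cells has become occupied."""
    return any(cell in changed and new_grid[cell[0]][cell[1]] == 1 for cell in path)
```

Checking only the changed cells keeps the update cheap: the robot keeps its existing plan when new shelves appear elsewhere and re-plans only when its route is actually blocked.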